MCP 2.0: What's Changing for AI Agents and RAG Pipelines

MCP 2.0 introduces long-running Task workflows, server-initiated Triggers, and native streaming — while six major AI companies formalize MCP as the universal agent standard under the Linux Foundation.

The Model Context Protocol reached a major inflection point in April 2026. A new version with long-running workflow support is on the way, and six of the largest AI companies have formally standardized MCP as the backbone of agentic AI infrastructure. If you're building RAG systems or AI agents, both developments matter.

What MCP Is and Why It Took Off

MCP — the Model Context Protocol — is an open standard for how AI models connect to external tools, APIs, and data sources. Anthropic originally published it in late 2024.

The core idea: instead of every model having its own proprietary function-calling format, MCP defines a universal schema. You write your tool once as an MCP server. Any MCP-compatible model can then call that tool — whether it's Claude, GPT-5, Gemma 4, or a local Llama variant.

For RAG, this is particularly useful. Your vector database, SQL database, web search integration, and document store can each be exposed as MCP servers. The LLM decides which to call, in what order, based on the user's query.
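To make the routing idea concrete, here is a minimal in-process sketch. The `tools/call` method and its `name`/`arguments` parameters follow MCP's JSON-RPC message shape, and the result envelope is simplified. The tool names (`vector_search`, `sql_query`), their stub bodies, and the `handle_request` helper are hypothetical stand-ins rather than part of any SDK.

```python
import json
from typing import Any, Callable

# Hypothetical retrieval backends; real ones would query a vector DB or SQL engine.
def vector_search(query: str, top_k: int = 3) -> list[str]:
    corpus = ["pricing update Q1", "onboarding guide", "pricing FAQ"]
    return [doc for doc in corpus if query.lower() in doc.lower()][:top_k]

def sql_query(table: str) -> list[dict[str, Any]]:
    return [{"table": table, "rows": 0}]

# One registry: the model picks a tool by name, the server dispatches.
TOOLS: dict[str, Callable[..., Any]] = {
    "vector_search": vector_search,
    "sql_query": sql_query,
}

def _error(req_id: Any, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})

def handle_request(raw: str) -> str:
    """Dispatch a JSON-RPC 'tools/call' request to the named tool."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return _error(req.get("id"), -32601, "Method not found")
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return _error(req.get("id"), -32602, f"Unknown tool: {params.get('name')}")
    result = tool(**params.get("arguments", {}))
    # Simplified result envelope: one text item carrying the JSON-encoded output.
    return json.dumps({"jsonrpc": "2.0", "id": req.get("id"),
                       "result": {"content": [{"type": "text",
                                               "text": json.dumps(result)}]}})
```

Because every backend sits behind the same request shape, adding a document store or web search tool is one more entry in `TOOLS`, not a new integration per model.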

MCP adoption exceeded most predictions:

- 110 million SDK downloads per month as of April 2026

- 10,000+ active MCP servers published publicly

- Supported natively by Claude, GPT-5, Gemini 2.5, and Gemma 4

What's New in MCP 2.0

The next version was announced at the MCP Dev Summit on April 2, 2026, and was developed collaboratively by Anthropic, Google, and Microsoft. Three headline additions:

1. Task Capability: Long-Running Autonomous Workflows

MCP 1.x is fundamentally request-response: the model calls a tool, the tool returns a result, the model continues. This works for single-step retrieval but breaks down for multi-step autonomous workflows that take seconds or minutes.

MCP 2.0's Task capability changes the model:

- A task is a unit of work that persists across multiple steps

- Servers can report progress back to the model during execution

- The model can pause, inspect intermediate state, and decide whether to continue or change direction

For agentic RAG, this is the difference between "search and answer" and "research a topic, synthesize findings from multiple sources, draft a response, verify claims, and return a polished result."
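The three properties listed above (persistent state, progress reporting, pause and inspect) can be illustrated with a small state machine. This is only a sketch of the idea: the `Task` class, `TaskState` values, and `pause_after` parameter are invented for illustration and are not the 2.0 wire format or an SDK interface.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Callable, Optional

Step = Callable[[dict[str, Any]], None]

class TaskState(Enum):
    PENDING = "pending"
    RUNNING = "running"
    PAUSED = "paused"
    DONE = "done"

@dataclass
class Task:
    """Hypothetical task: ordered steps sharing one persistent context."""
    steps: list[Step]
    state: TaskState = TaskState.PENDING
    context: dict[str, Any] = field(default_factory=dict)
    completed: int = 0

    @property
    def progress(self) -> float:
        """Fraction of steps finished, suitable for a progress report."""
        return self.completed / len(self.steps) if self.steps else 1.0

    def run(self, pause_after: Optional[int] = None) -> None:
        """Run remaining steps, pausing once `pause_after` steps have completed."""
        self.state = TaskState.RUNNING
        while self.completed < len(self.steps):
            if pause_after is not None and self.completed >= pause_after:
                self.state = TaskState.PAUSED
                return
            self.steps[self.completed](self.context)
            self.completed += 1
        self.state = TaskState.DONE

task = Task(steps=[
    lambda ctx: ctx.update(sources=["report_a", "report_b"]),
    lambda ctx: ctx.update(draft=f"summary of {len(ctx['sources'])} sources"),
    lambda ctx: ctx.update(verified=True),
])
task.run(pause_after=1)   # stop after the research step
# The caller can now inspect task.context and task.progress before deciding.
task.run()                # resume from where the task paused
```

The key difference from a plain function call is that `context` and `completed` survive between calls to `run`, so the caller can change direction at a pause point instead of restarting.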

2. Triggers: Server-Initiated Actions

In MCP 1.x, the model always initiates. MCP 2.0 introduces Triggers — the ability for an MCP server to push events to the model.

Practical examples:

- A document store notifies the model when a new file is added that's relevant to an ongoing task

- A monitoring system triggers the model when an anomaly is detected

- A workflow orchestrator resumes a paused agent when a human approves a decision

This moves agentic AI from purely reactive (waits for a user query) to proactive (can respond to events in the environment).
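The push direction can be sketched as a publish/subscribe loop. Everything here is hypothetical: the `TriggerBus` class, the `document.added` event name, and the relevance check are illustrative assumptions about how a trigger might be consumed, not the protocol's actual interface.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], None]

class TriggerBus:
    """Hypothetical server-side event source that pushes events to subscribers."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def emit(self, event_type: str, payload: dict[str, Any]) -> int:
        """Push an event to every subscriber; return how many were notified."""
        handlers = self._handlers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)

# Events the agent decided were relevant to its ongoing task.
inbox: list[dict[str, Any]] = []

def on_document_added(event: dict[str, Any]) -> None:
    # Keep only documents that look relevant to a pricing-research task.
    if "pricing" in event["title"].lower():
        inbox.append(event)

bus = TriggerBus()
bus.subscribe("document.added", on_document_added)
bus.emit("document.added", {"title": "Competitor pricing, March"})
bus.emit("document.added", {"title": "Office seating chart"})
```

After both events, `inbox` holds only the pricing document: the server initiated the exchange, and the agent side filtered for relevance.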

3. Native Streaming, Retry Semantics, and Domain Skills

The 2.0 spec also adds production-grade reliability features:

- Native streaming: partial results flow back to the model during tool execution, not just at completion

- Retry semantics: standardized handling for transient failures, with exponential backoff built into the protocol

- Expiration policies: tasks and tool calls can have time limits

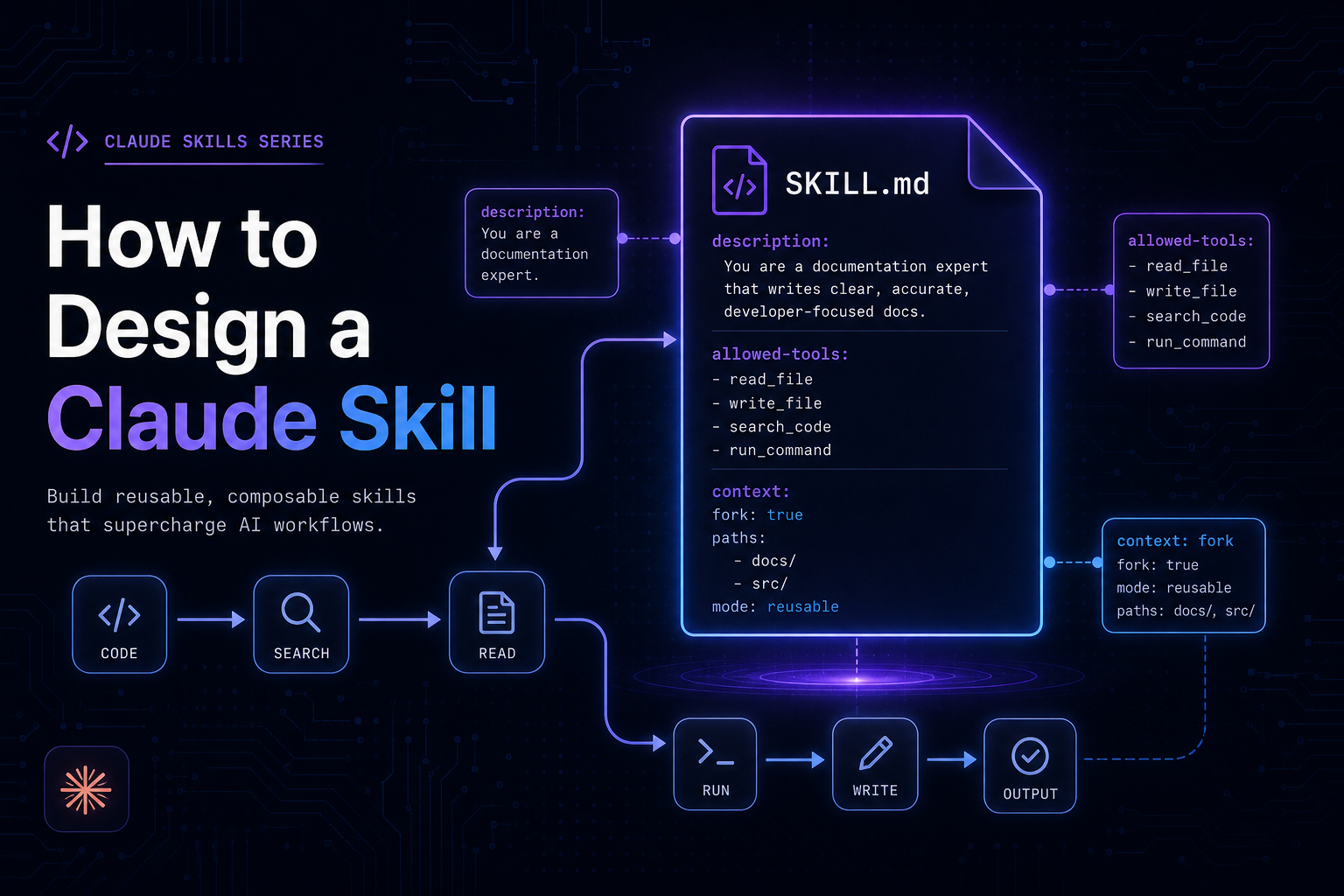

- Reusable domain skills: packages of MCP tools that can be shared and composed across agents
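To illustrate the retry, expiration, and streaming items above, here is a client-side sketch of the same behavior. It assumes nothing about how the protocol encodes these policies: `TransientError`, `call_with_retry`, `stream_chunks`, and all parameter names and defaults are hypothetical.

```python
import time
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")

class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""

def call_with_retry(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.1,
    deadline_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `fn` with exponential backoff until it succeeds or time runs out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    start = time.monotonic()
    for attempt in range(max_attempts):
        try:
            return fn()
        except TransientError:
            delay = base_delay * (2 ** attempt)  # 0.1, 0.2, 0.4, ...
            would_expire = (time.monotonic() - start) + delay > deadline_s
            if attempt == max_attempts - 1 or would_expire:
                raise  # attempts exhausted or the expiration limit would be hit
            sleep(delay)
    raise AssertionError("unreachable")

def stream_chunks(text: str, size: int) -> Iterator[str]:
    """Yield partial results as they become available instead of one final blob."""
    for i in range(0, len(text), size):
        yield text[i:i + size]
```

Passing `sleep` as a parameter keeps the backoff schedule testable without real waiting, and the generator form lets a consumer act on each chunk before the tool has finished.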

The Agentic AI Foundation: Why It Matters

In March 2026, Anthropic, OpenAI, Google, AWS, Microsoft, and Salesforce co-founded the Agentic AI Foundation (AAIF) under the Linux Foundation.

The foundation governs three things:

- MCP: universal protocol for agent-to-tool communication

- AGENTS.md (OpenAI): a convention for giving coding agents project-specific instructions

- Goose (Block): open-source agent runtime

This is significant for a few reasons:

It removes the "will this be deprecated?" risk. MCP was already the de facto standard, but it was maintained by Anthropic alone. Putting it under a neutral foundation means no single vendor can deprecate it or redirect the specification unilaterally. Enterprise buyers who were cautious about building on a single-vendor standard now have less reason to hold back.

It consolidates the fragmented tool-use landscape. Before MCP, each major model had its own function-calling format. OpenAI had its tools API; Claude had tool_use; Gemini had its own format. Each required separate integration work. AAIF's mandate is to keep these converged.

It's the Kubernetes moment for agentic AI. The Linux Foundation's governance of Kubernetes, through the Cloud Native Computing Foundation, gave enterprises the confidence to standardize on container orchestration. AAIF is aiming for the same effect for agent infrastructure.

What This Means for RAG Builders

Your Tool Layer Becomes Universal

If you've built MCP servers for your retrieval backends, they now work across every major model. You write your vector search integration once; it's callable from Claude, GPT-5, Gemma 4, and any future model that adopts the standard.

Agentic RAG Gets More Capable

MCP 2.0's Task capability unlocks multi-step RAG workflows that weren't practical before:

User: "Summarize all competitor pricing changes from the last quarter"

Agent (MCP 2.0 Task):

Step 1: Search CRM for competitor records → 15 companies found

Step 2: For each company, retrieve pricing pages from web tool → 15 calls

Step 3: Extract price changes via structured extraction tool → structured data

Step 4: Deduplicate and rank by significance

Step 5: Draft summary → return to user

In MCP 1.x, this required custom orchestration logic outside the protocol. In 2.0, the Task capability handles state, progress, and partial results natively.
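The five steps above can be sketched as a generator that reports progress after each stage. The tools are stubs with made-up data (three CRM records instead of fifteen, with one duplicate), and every function name is hypothetical. A real task would call MCP tools where these stubs return canned values.

```python
from typing import Any, Iterator

# Stub tools; real versions would call a CRM, a web fetcher, and an extractor.
def search_crm() -> list[str]:
    return ["Acme", "Globex", "Acme"]  # duplicate record on purpose

def fetch_pricing_page(company: str) -> str:
    pages = {"Acme": "Pro plan: 40 -> 50", "Globex": "Team plan: 20 -> 21"}
    return pages[company]

def extract_change(company: str, page: str) -> dict[str, Any]:
    plan, prices = page.split(": ")
    old, new = (float(p) for p in prices.split(" -> "))
    pct = round((new - old) / old * 100, 1)
    return {"company": company, "plan": plan, "pct_change": pct}

def run_pricing_task() -> Iterator[dict[str, Any]]:
    """Yield a progress event after each step; the last event carries the result."""
    companies = search_crm()
    yield {"step": 1, "status": f"{len(companies)} records found"}

    pages = [(c, fetch_pricing_page(c)) for c in companies]
    yield {"step": 2, "status": f"{len(pages)} pages fetched"}

    changes = [extract_change(c, p) for c, p in pages]
    yield {"step": 3, "status": f"{len(changes)} changes extracted"}

    # Deduplicate by company, then rank by the size of the price change.
    unique = {c["company"]: c for c in changes}.values()
    ranked = sorted(unique, key=lambda c: abs(c["pct_change"]), reverse=True)
    yield {"step": 4, "status": f"{len(ranked)} unique changes ranked"}

    summary = "; ".join(
        f"{c['company']} {c['plan']}: {c['pct_change']:+.1f}%" for c in ranked
    )
    yield {"step": 5, "status": "done", "result": summary}

for event in run_pricing_task():
    print(event["step"], event["status"])
```

Each `yield` is a point where a caller could inspect intermediate state or stop consuming, which is the behavior the Task capability is described as moving into the protocol.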

The Standardization Dividend

As more tools publish as MCP servers, RAG systems get access to a growing ecosystem without custom integration work. The AAIF has already announced plans for a curated MCP server registry, similar to what npm did for JavaScript packages.

Migrating from MCP 1.x to 2.0

MCP 2.0 is backward-compatible. Existing 1.x servers will continue to work. To take advantage of new capabilities:

| Capability | Required Changes |
|---|---|
| Task (long-running) | Implement task lifecycle handlers in your server |
| Triggers | Register event sources and push handlers |
| Streaming | Update server to yield partial results |
| Retry semantics | Declare retry policies in server manifest |

The Python and TypeScript SDKs will ship with migration guides and compatibility layers. If your current MCP integration is working well, there's no urgency to upgrade — the new features are additive.
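Since the new features are additive, a client can check what a server declares and fall back to plain request-response otherwise. The sketch below assumes capabilities arrive as a dictionary keyed by name, as in MCP's existing initialization handshake. The capability names `tasks`, `triggers`, `streaming`, and `retry` are guesses, and the real 2.0 identifiers may differ.

```python
from typing import Any

# Hypothetical capability names; the real 2.0 identifiers may differ.
NEW_CAPABILITIES = ("tasks", "triggers", "streaming", "retry")

def plan_features(server_capabilities: dict[str, Any]) -> dict[str, bool]:
    """Map each new capability to whether this server declared it.

    Presence of the key counts as support, even if its value is an empty
    object, so the check uses `in` and not truthiness.
    """
    return {name: name in server_capabilities for name in NEW_CAPABILITIES}

def choose_call_mode(server_capabilities: dict[str, Any]) -> str:
    """Pick the richest call mode the server supports, else fall back."""
    features = plan_features(server_capabilities)
    if features["tasks"]:
        return "task"
    if features["streaming"]:
        return "streaming"
    return "request-response"

legacy_server = {"tools": {}}                      # an existing 1.x server
upgraded_server = {"tools": {}, "tasks": {}, "streaming": {}}

print(choose_call_mode(legacy_server))
print(choose_call_mode(upgraded_server))
```

With this pattern an existing server keeps working unchanged, and each row of the table above becomes an opt-in the client discovers at connection time.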

What to Watch

- Late April 2026: Updated Python and TypeScript SDKs with MCP 2.0 support

- Q2 2026: AWS, Google Cloud, and Azure rolling out managed MCP 2.0 infrastructure

- AAIF MCP Server Registry: Public launch expected at Google I/O (May 20)

- Agent-to-agent communication spec: AAIF working group forming to standardize how MCP agents talk to each other — the missing piece for multi-agent RAG architectures