AI Digest: April 2026

Gemma 4 goes open-source, Meta drops Llama 5 with 5M token context, MCP 2.0 launches with the Agentic AI Foundation, OpenAI consolidates into a superapp, and Anthropic hits $30B run-rate.

April 2026 delivered back-to-back releases: two major open-weight models in one week, the next version of MCP, a massive product consolidation from OpenAI, and a revenue milestone from Anthropic that underscores just how fast this industry is monetizing. Here's what happened and why it matters.

Model Releases

Google Gemma 4: Open-Source, Multimodal, and RAG-Ready

Google released Gemma 4 on April 2, the most capable open model family the company has shipped. Four sizes cover everything from on-device inference to datacenter workloads:

- E2B / E4B: Optimized for Android devices and developer laptops

- 26B MoE: Trades some quality for speed and efficiency, ranks #6 on the open-source Arena AI leaderboard

- 31B Dense: Highest quality, ranks #3 — outperforming models 20× its size

Key specs that matter for builders:

- 256K token context window on the larger models

- Native multimodal: text, image, audio, and video inputs out of the box

- Structured JSON output and function calling for agentic workflows

- 140+ language support

- Apache 2.0 license: commercial use permitted, subject to the license's attribution and notice terms

Deployment is available via Google AI Studio, vLLM, and Cloudflare Workers AI from day one. The model is built on Gemini 3 research, which helps explain a performance-to-size ratio that is still surprising for models this small.

For RAG developers specifically, the combination of 256K context, native function calling, and local deployment options makes Gemma 4 one of the most practical open models to date.
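To make the function-calling point concrete, here is a minimal sketch of how an application might route a model's structured JSON tool call to a local retriever. It is not Gemma-specific: the tool name `search_docs`, the schema layout, and the shape of the model output are all assumptions made for illustration, and a real integration would follow the serving stack's own tool-call format.

```python
import json

# Hypothetical retriever used only for illustration; a real one would
# query a vector store or search index.
def search_docs(query: str, top_k: int = 3) -> list[str]:
    corpus = ["Gemma 4 release notes", "MCP overview", "Llama 5 model card"]
    hits = [doc for doc in corpus if query.lower() in doc.lower()]
    return hits[:top_k]

# Registry mapping tool names to Python callables (names are assumptions).
TOOLS = {"search_docs": search_docs}

# JSON-Schema-style description that would be sent to the model so it
# knows which arguments the tool accepts.
TOOL_SCHEMA = {
    "name": "search_docs",
    "description": "Search the local document index.",
    "parameters": {
        "type": "object",
        "properties": {
            "query": {"type": "string"},
            "top_k": {"type": "integer"},
        },
        "required": ["query"],
    },
}

def dispatch_tool_call(raw: str):
    """Parse a JSON tool call emitted by a model and run the matching tool."""
    call = json.loads(raw)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))

# Hand-written stand-in for model output, not produced by a real model.
model_output = '{"name": "search_docs", "arguments": {"query": "gemma"}}'
print(dispatch_tool_call(model_output))
```

Keeping the registry separate from the schema means unknown tool names fail loudly instead of being executed, which matters once model output drives real side effects.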

Meta Llama 5: The Open-Source Giant Gets Bigger

Meta released Llama 5 on April 8, and the numbers are a significant jump from Llama 4:

- 600B+ parameters (up from Llama 4 Maverick's 400B)

- 5 million token context window — the longest in any publicly available model

- Recursive Self-Improvement capability: the model can identify its own reasoning errors mid-task and correct them

- Benchmark performance matches GPT-5 and Gemini 2.0 on major evaluations

The 5M context window is the headline. At that scale, an entire enterprise codebase, a year of Slack messages, or a full legal case file fits in a single prompt. The implications for RAG architectures are real: some retrieval steps become optional when you can just feed the whole corpus.
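One way to reason about when retrieval becomes optional is a simple token-budget check. The sketch below is an assumption-heavy illustration: the four-characters-per-token ratio is a rough heuristic rather than any model's real tokenizer, and `choose_strategy` and `reserved_for_output` are hypothetical names.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; assumes about 4 characters per token."""
    return int(len(text) / chars_per_token) + 1

def choose_strategy(documents, context_window, reserved_for_output=8_000):
    """Return 'full_context' if the whole corpus fits, else 'retrieve'."""
    total = sum(estimate_tokens(doc) for doc in documents)
    budget = context_window - reserved_for_output
    return "full_context" if total <= budget else "retrieve"

# Ten synthetic documents of 4,000 characters each.
docs = ["a" * 4_000] * 10
print(choose_strategy(docs, context_window=5_000_000))
print(choose_strategy(docs, context_window=10_000))
```

Fitting in the window is a necessary condition, not a sufficient one: cost per request and latency still grow with prompt size, so retrieval can remain the cheaper path even when it is no longer required.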

That said, running Llama 5 locally requires serious hardware. For most teams, this is an API model or a cloud-hosted inference story, not a laptop deployment.
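A back-of-the-envelope calculation shows why. The sketch below estimates memory for the weights alone, taking 600B parameters as a lower bound from the figure above. It ignores the KV cache, activations, and any architecture details, none of which are given here, so real requirements would be higher.

```python
def weight_memory_gb(params: float, bits_per_param: int) -> float:
    """Memory needed to hold the weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9

PARAMS = 600e9  # lower bound taken from the "600B+" figure

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(PARAMS, bits):,.0f} GB")
```

That works out to roughly 1.2 TB at 16-bit precision and 300 GB even at 4-bit quantization, well beyond a single consumer GPU.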

Industry Moves

MCP 2.0: Long-Running Workflows and a Formal Standard

The MCP Dev Summit on April 2 announced the next major version of the Model Context Protocol, with three headline additions:

- Task capability: servers can now handle long-running autonomous workflows instead of single request-response cycles

- Triggers: servers can initiate actions proactively, not just respond to model requests

- Native streaming + retry semantics: production-grade reliability for agentic pipelines
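To illustrate what retry semantics buy a long-running workflow, here is a generic retry-with-exponential-backoff sketch. It is not the MCP 2.0 API, whose details are not given here: `TransientError`, `run_with_retry`, and the delay values are hypothetical names and numbers chosen for the example.

```python
import time

class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""

def run_with_retry(step, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call step() until it succeeds, doubling the wait after each failure."""
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))

# Demo: a step that fails twice before succeeding. Delays are recorded
# instead of slept so the example finishes instantly.
attempts = {"count": 0}

def flaky_step():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("temporary failure")
    return "done"

recorded_delays = []
print(run_with_retry(flaky_step, sleep=recorded_delays.append))
print(recorded_delays)
```

Passing `sleep` in as a parameter keeps the backoff logic testable without real waits. A protocol-level version of the same idea moves this loop out of every client and into shared infrastructure.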

Alongside this, six companies — Anthropic, OpenAI, Google, AWS, Microsoft, and Salesforce — co-founded the Agentic AI Foundation (AAIF) under the Linux Foundation. MCP is now the official universal protocol for agent tooling, sitting alongside OpenAI's AGENTS.md and Block's Goose project.

MCP SDK downloads currently run at 110 million per month. Standardization under a neutral foundation removes the "will this be deprecated?" risk that slowed enterprise adoption of proprietary tool-use APIs.

OpenAI's Superapp: One App to Replace Three

In late March, OpenAI announced it was consolidating ChatGPT, Codex, and Atlas into a single desktop superapp. Fidji Simo, OpenAI's CEO of Applications, cited product fragmentation as the driver: users were context-switching between tools for conversation, code, and computer use.

The same week, OpenAI also:

- Shut down Sora after six months — citing usage data showing video generation wasn't sticking as a standalone product

- Confirmed $25B+ in annualized revenue

- Acquired Astral, the Python tooling company behind uv and ruff

- Positioned GPT-5.4 (released March 5) as the enterprise model for autonomous professional workflows

The Astral acquisition is the sleeper story here. Controlling the fastest Python package manager and linter gives OpenAI a credible path into developer infrastructure — beyond the model API layer.

Anthropic: $30B Run-Rate, 1,000+ Enterprise Customers

Anthropic disclosed that its run-rate revenue has surpassed $30 billion, up from $9 billion at the end of 2025. More telling: over 1,000 business customers are now spending $1 million or more annually.

The company also signed an agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity, expected online starting in 2027. At this revenue trajectory, compute capacity is the binding constraint — not model capability or customer demand.

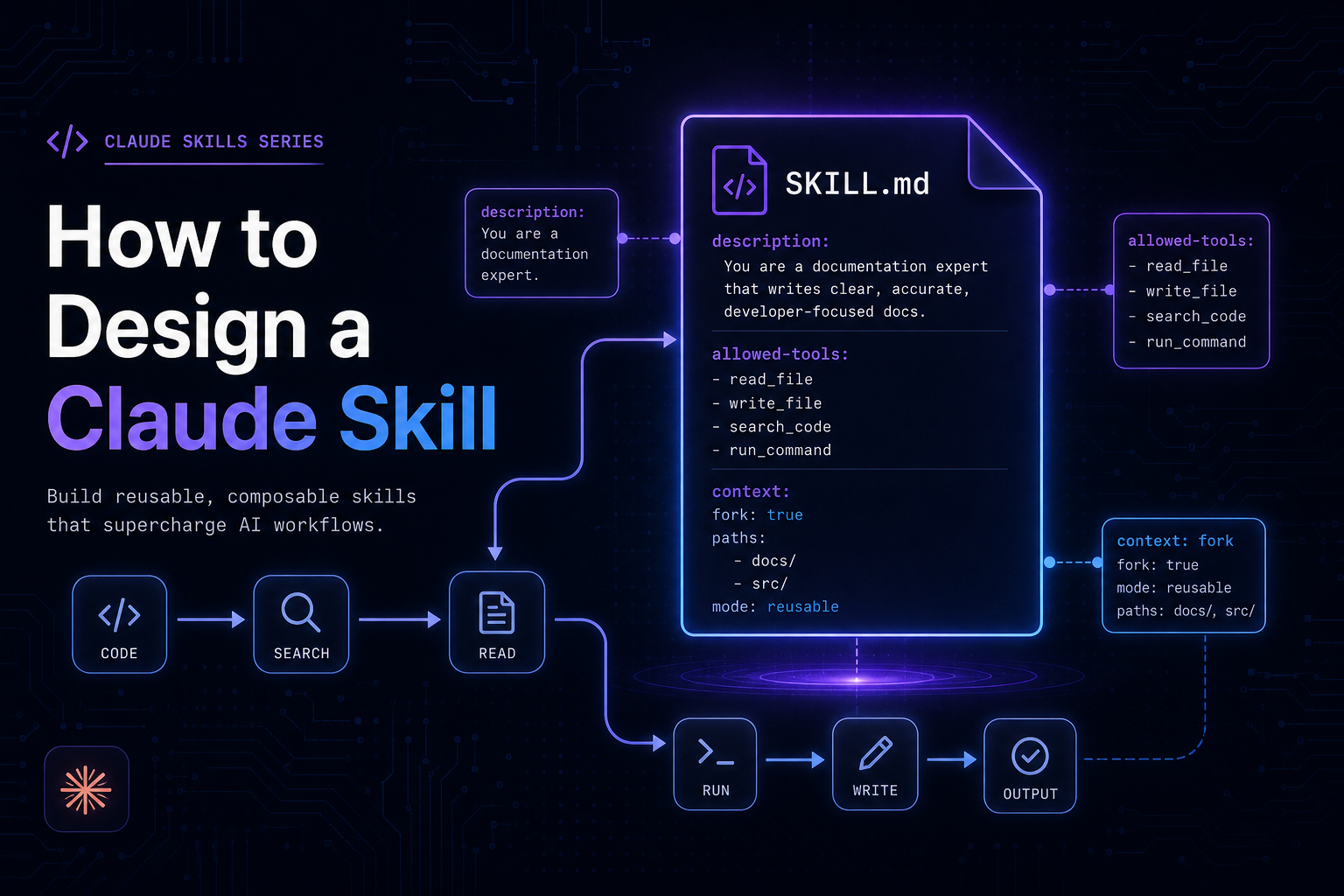

Claude Code, Anthropic's terminal-native coding agent, was cited as a primary growth driver and reportedly prompted OpenAI to accelerate its own product plans.

What to Watch

Coming weeks:

- Google I/O (May 20): Expected Gemini 3 Pro and further agentic platform announcements

- MCP 2.0 SDKs (Python and TypeScript): Final spec release expected late April

- EU AI Act enforcement: General-purpose AI model obligations take full effect for frontier models

- Llama 5 fine-tuning community: First wave of fine-tuned variants likely appearing on Hugging Face within days